4,000 People Fired for AI. I Have Thoughts.

What Block got right, what they got wrong, and what leaders need to know.

Welcome to the 103 new FullStack HR readers who joined last week. And hello if someone sent you this link: if you aren't subscribed yet, you can subscribe to this free newsletter and join the other like-minded readers.

Happy Tuesday,

I had another article written for today, but on the plane to Norway, where I’m about to work with a group of people on AI and leadership, I read and listened to a lot of coverage of Block, and one thing led to another.

I’ve been keeping a close eye on Block for a year and, as usual, there’s more to the story than whether AI did or didn’t cause the layoffs.

But we’re stuck in this polarized space where one side says AI is just hype and overblown and everything will be fine, and the other side says AI is going to take it all and it’s already happening. And the truth, as always, is somewhere in the messy, uncomfortable middle. But that middle ground doesn’t get clicks, doesn’t go viral, and doesn’t sell anything.

So maybe I’m shooting myself in the foot here, but who cares.

I did a video about it all. This article is that video, turned into text with Claude's help. You can also listen to the article on Spotify.

We’ve all seen the headlines by now. Block lays off nearly half of its staff because of AI, and its CEO says that most companies will eventually do the same.

It’s one headline among many that came out of the Block layoff, and I’ve been sitting with this for a while now, not quite sleeping on it, but quietly reading what people have written, processing it, and trying to figure out where I actually land on all of this.

And here’s my take, because I genuinely don’t think this is as simple as the internet is trying to make it. On one side we have people saying this is hyperbolic, overhyped, and that none of this actually has anything to do with AI.

And on the other side we have people saying this IS AI and it’s coming for all of us, that this is just the beginning. The truth, as it usually does, lies somewhere in between those two extremes, and I think it’s worth unpacking what’s actually going on here because the nuance matters more than most people seem willing to admit.

What actually happened

Let’s start with the facts as we know them. Block laid off roughly 4,000 employees in a single day, about 40% of its workforce.

Following the announcement, the stock price jumped approximately 20%. And this is not some struggling startup burning through runway. This is a profitable company with healthy gross margins and strong net income. The revenues aren’t growing as aggressively as they have in past years, but the fundamentals are solid, and they’ve now set a new and extremely ambitious target around gross profit per employee.

Jack Dorsey, the CEO, went out and explicitly said this was because of AI. Not partially, not as a contributing factor, but as the reason. That’s his stated position. He did also acknowledge that they had over-hired to some extent, and we’ll come back to why that matters, but his primary narrative was unambiguous. This is an AI play.

There are people who support that story too. One former employee, Ivan, gave Business Insider a quote that I find particularly interesting: he said he had felt the rumblings of AI disruption for a while, because he was using it in his own work and noticed the tools getting better and better. And if you scroll through comments on X and LinkedIn, you’ll find people confirming that AI was being used heavily and broadly inside Block, which does lend credibility to the narrative.

One thing I want to address specifically, because I’ve seen it repeated in media, is the claim that it’s mainly support roles being cut. As far as I’ve been able to find, that’s not the full picture at all. I’m not on the inside, so this is admittedly a limited view, but the people I’ve seen affected include data analysts, core engineers, and roles well beyond what you’d typically file under “support.” To what extent those cuts skew one way or the other, I honestly don’t know, but neither do the analysts confidently claiming it’s only support roles being made redundant.

The evidence that this really is AI

So what’s the actual evidence supporting the claim that AI is genuinely driving this?

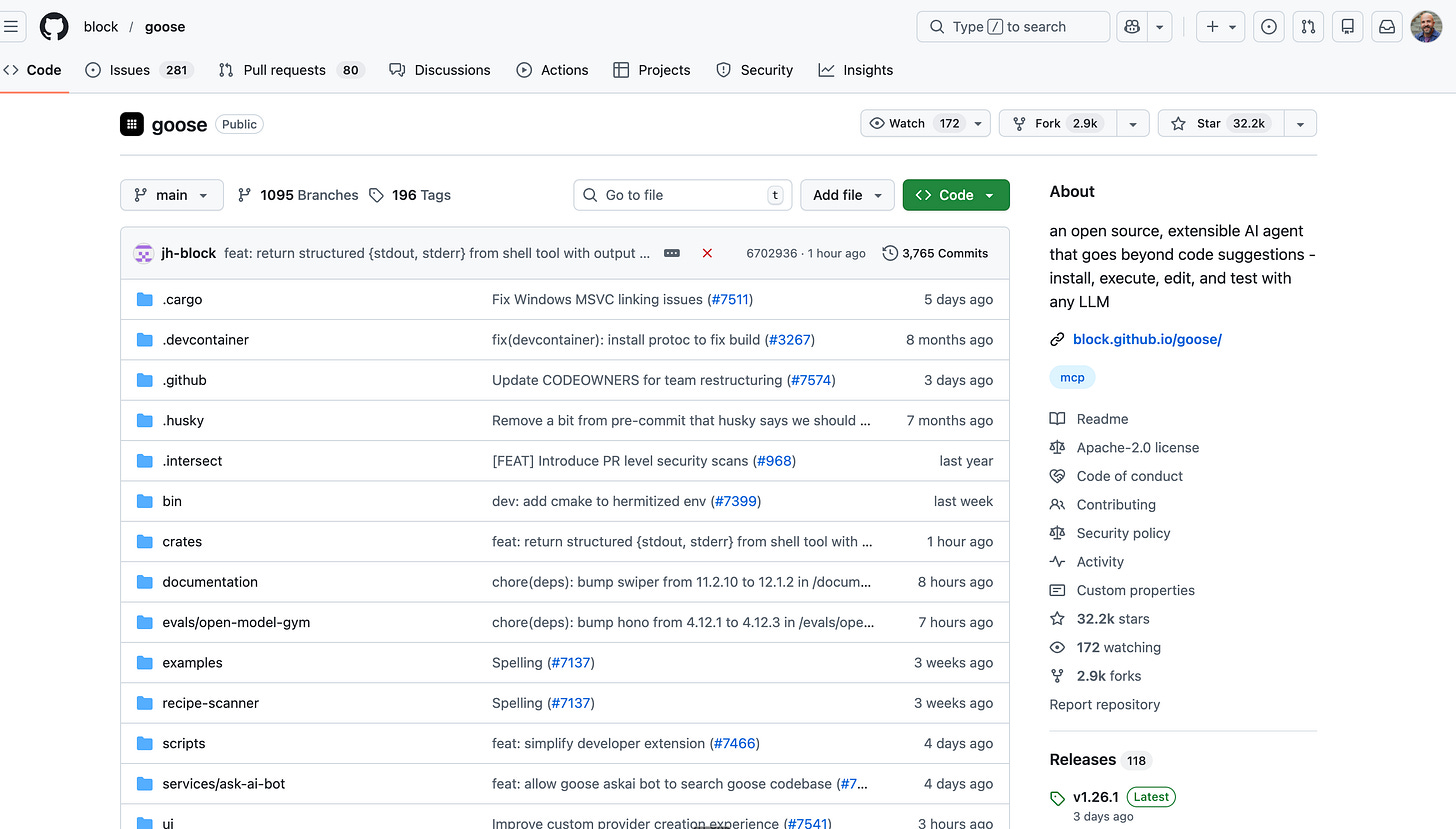

First, they have real tooling. Block built an AI tool called Goose, it’s open source, and they’ve been investing in it and iterating on it for a meaningful amount of time. This isn’t a company that just slapped a ChatGPT wrapper on something and called it a strategy. You can go look at Goose yourself if you’re a reasonably capable engineer, and the CTO has been on multiple podcasts walking through exactly how it works and how it’s been integrated into their development workflows. That’s actually why Block has been on my radar for at least a year. I think it was around May when I first noticed them genuinely doubling down on AI adoption, and I’ve been watching them closely since. Lenny’s Newsletter also did a deep piece on how Block is becoming the most AI-native company out there.

Second, they reorganized around AI before the layoffs happened. That sequence matters, because it means they had already taken deliberate steps to restructure the organization in a way that matches their AI capabilities, which suggests this wasn’t just an overnight decision dressed up in an AI costume.

Third, lots of people internally had been actively working towards integrating AI into their day-to-day work at Block. The numbers being thrown around suggest AI can absorb roughly eight to ten hours of engineering work per week, which, if you take it seriously, is a significant chunk of what a person does. Assuming a 40-hour week, that is somewhere between a fifth and a quarter of the job.

So yes, I think there is a genuinely strong case that AI played a real role here.

The evidence against

But there’s also meaningful evidence pointing the other way.

Dorsey himself went on X and openly admitted that he over-hired during COVID. That is, for me, a pretty clear signal that this isn’t purely an AI story. If you’re openly telling the world you made a structural hiring mistake, the logical first move is to correct that mistake, and you don’t need AI as a justification for doing so.

Then there’s Sam Altman, who on February 19th, just a couple of days before the Block announcement, went out and essentially called the broader trend “AI washing.” He wasn’t specifically talking about Block, but the timing is notable. And here’s what makes that data point interesting to me.

It is very clearly in Sam Altman’s interest, as the CEO of OpenAI, to say the opposite. If he wanted to, he could lean into the narrative that AI is replacing jobs, because that would validate the power of his own product. So when even he says this feels more like washing than reality, I think that carries some weight.

And finally, the market reaction. The stock jumped 20%, and yes, AI was part of the narrative, but I think what the market actually rewarded was the cut itself. Not that the cut was AI-driven, but that Block was doing more with less, running a tighter ship, and showing fiscal discipline. Markets have historically loved layoffs regardless of the stated reason, and I don’t think this time is fundamentally different in that regard.

My verdict

So where do I land? I think it’s a combination of both, and I think anyone who tells you it’s purely one or the other is oversimplifying to the point of being wrong.

I think there is genuine, demonstrable impact from the AI tools they’ve built and deployed, and we could see evidence of that well before any of these layoffs were announced. But I also think this was partly a correction of structural over-hiring, partly a PR-savvy way to frame that correction, and partly a play that they knew the market would reward.

As Om Malik put it, the AI narrative and the cost-cutting narrative are deeply intertwined here. And that matters, because once you’ve opened the floodgates on this narrative, whether it’s fully accurate or not, a lot of other companies are going to look at that 20% stock bump and start thinking about how they can tell a similar story.

What I take away from all of this

AI can eliminate significant work, and we need to stop pretending otherwise. The biggest gains right now are mostly concentrated in software engineering, and we’ve seen that reflected in GDP value and across a multitude of other indicators. Even Oxford Economics notes that while the evidence of an AI-driven shakeup is still patchy, the direction of travel is clear. If organizations are willing and if they’re set up in a smart way, AI can go in and eliminate real, meaningful portions of work. I’m not saying we should rush to do that, and I’m not saying organizations are ready to absorb those efficiencies without serious thought, but the capability is there and it’s growing fast.

We need to have honest, open conversations about this.

What AI can do today was not possible even a couple of months ago, and the pace of change means we can’t keep pretending this isn’t happening. I’m a bit tired, honestly, of the way this conversation plays out in public, because it’s always one extreme or the other. It’s either “AI is just hype, we’ll be fine, everything is fine” or “AI is going to take everything, it’s already here, panic now.”

And the truth is somewhere in between, but that nuanced middle ground doesn’t get likes, doesn’t get views, and doesn’t get anyone to buy anything. To some extent, dismissing AI feels like peeing your pants to stay warm. It’s warm and comfortable right now to say AI won’t take any significant part of work, but the comfort doesn’t last. If we can see on the horizon that it’s becoming a real possibility, we should absolutely not be underestimating the impact.

The limitation lies with the organization, not the technology.

The models are capable. The tools are capable. What holds most companies back is their own organizational readiness, their processes, their culture, and their willingness to genuinely rethink how work gets done.

That’s where the hard work is, and it’s the part nobody wants to talk about because it doesn’t fit neatly into a headline.

The 40% cut was not purely AI, and that distinction matters.

It makes a great headline, of course. But over-emphasizing the AI angle gives people a distorted picture of what’s actually happening, and it sets unrealistic expectations for what AI can automagically accomplish.

This was partly an AI play and partly a correction of structural mistakes, and I suspect Block is far from the only company in that position right now.

Wall Street rewarded this heavily, and that creates a dangerous incentive.

We’ve seen the AI narrative used before, and I think we’ll see it used a lot more going forward. If you’re a CEO watching this unfold and you see the stock price reaction, the temptation to run the same playbook is enormous.

But think hard about what happens next. If this is the narrative you go with, your people are not going to be eager to adopt AI, because they’ve just watched it be used as the justification for firing their colleagues. You might want transformation, but without your people on board, you’re not going to get it.

AI skills are not layoff insurance, and we need to stop pretending they are.

This one is particularly clear from the Block situation. People who were interviewed, people sharing their stories on X and LinkedIn, were actively using AI tools in their work. Some of them were contributing to building AI tools.

And they still got laid off. So the whole mantra of “AI won’t take your job, but someone using AI will” needs to be seriously questioned, if not dismissed entirely.

I’m not saying you shouldn’t be learning and working with AI. You absolutely should. But don’t let anyone sell you the idea that AI proficiency is some kind of bulletproof vest. It wasn’t true here, and I don’t think it will be true going forward either.

For all the leaders out there, the real question is HOW you transform, not IF. Transformation is coming whether you like it or not, and for most organizations it will be necessary.

But how you do it is what actually matters. If you believe your organization will still need people in the future, which I firmly believe it will for the next several years at minimum, then the way you approach this transformation is absolutely crucial.

When you start defaulting to “it’s AI, it’s AI” without addressing the underlying issues, which might have nothing to do with AI at all, you’re burning a card you can probably only play once or twice. And when that card is spent, what will you say to your people, your shareholders, your board?

Think about that long and hard.

Really appreciate the nuance here...the polarized framing (AI hysteria vs. "it's just hype") misses the actual behavioral story that's already playing out at the individual level. 🤔

We published a study in February with 1,000 US workers on what they're actually doing with AI at work. One thing that speaks directly to your point about the career ladder problem: 22.4% used AI in real time during a live job interview, and 13.6% used it to land the job they currently hold. Nearly 1 in 5 say their professional skills are getting worse since using AI regularly. So at the same time companies are cutting headcount because AI can do the work, workers are quietly eroding the capabilities that would have made them irreplaceable. 😅

The Block story sits at one end of the spectrum. The individual skill atrophy story is the quieter version of the same problem. Both are real simultaneously, which is exactly the middle ground you're describing.

Full data here if useful: novoresume.com/career-blog/ai-at-work-survey